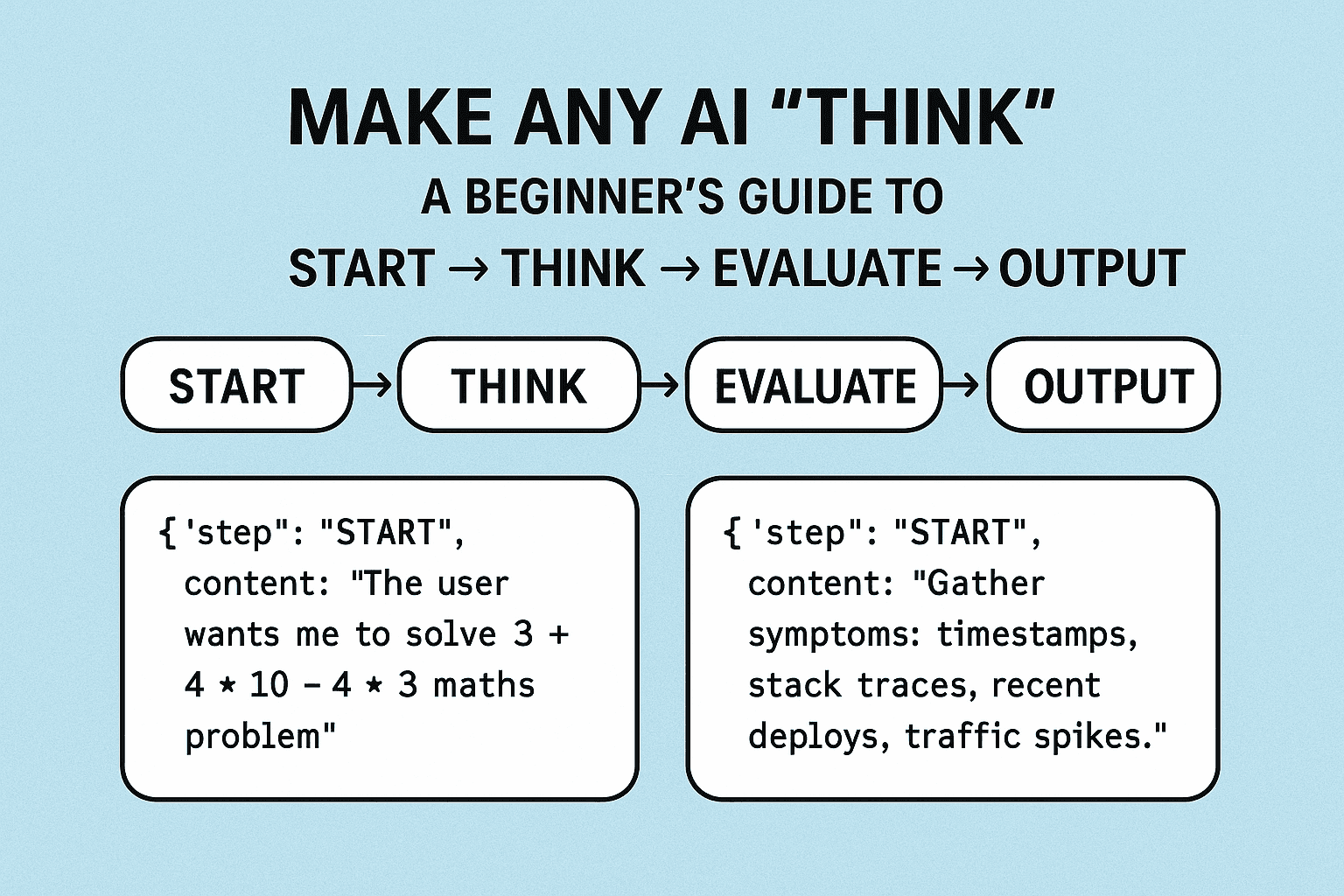

Make Any AI “Think”: A Beginner’s Guide to START → THINK → EVALUATE → OUTPUT

User: My page broke after the deploy — fix it.

Assistant (fast, non-thinking): Clear cache, redeploy, check logs — done.

Assistant (thinking style): { "step":"START","content":"I will gather symptoms: error messages, browser console, recent changes." }

…and then it breaks the problem into checks, hypotheses, tests, and only at the end gives a concise fix.

Big difference, right? The first reply is a lucky guess. The second is reproducible, auditable, and useful — because it shows the work.

What this method is (short)

A tiny, human-friendly protocol that forces an LLM to work step-by-step, present intermediate reasoning as structured JSON, pause for verification, and then produce a final answer. Perfect for multi-step tasks: debugging, math, planning, code reasoning.

The format & hard rules (copy-paste ready)

Assistant behavior rules:

Always follow sequence: START → THINK → EVALUATE → OUTPUT.

Emit exactly one JSON object per assistant message:

{ "step": "START | THINK | EVALUATE | OUTPUT", "content": "string" }

Do one step at a time and then wait for the next instruction. Every

THINKmust be followed by anEVALUATE.Perform multiple

THINKsteps as needed before a finalOUTPUT.EVALUATEis performed externally (human, script, or CI) and confirms whether to continue.

Think of it like a recipe where the cook narrates every move and waits for a taste-test after each important step.

Why it works — quick analogy

Non-thinking models = a chef who hands you a mystery dish. Thinking models = the chef narrating the recipe as they cook — you can taste, adjust, reproduce. EVALUATE = the taste test (human or automated). Instead of guessing why something’s off, you can pinpoint the step and fix it.

Walkthrough — your exact JSON-style example (math)

User: Can you solve 3 + 4 * 10 - 4 * 3?

Assistant:

{ "step": "START", "content": "The user wants me to solve 3 + 4 * 10 - 4 * 3 maths problem" }

Assistant:

{ "step": "THINK", "content": "This is a typical problem requiring BODMAS/PEMDAS." }

Assistant:

{ "step": "EVALUATE", "content": "Alright, Going good" }

Assistant:

{ "step": "THINK", "content": "Breakdown: solve multiplications first: 4*10=40 and 4*3=12." }

Assistant:

{ "step": "EVALUATE", "content": "Alright, Going good" }

Assistant:

{ "step": "THINK", "content": "Now compute: 3 + 40 - 12 = 31." }

Assistant:

{ "step": "EVALUATE", "content": "Alright, Going good" }

Assistant:

{ "step": "OUTPUT", "content": "3 + 4 * 10 - 4 * 3 = 31" }

Note how every reasoning step is explicit and pausable. That audit trail is gold for debugging and trust.

Walkthrough — debugging example (short)

User: My API returns 500 intermittently.

Assistant could produce:

{ "step":"START","content":"Gather symptoms: timestamps, stack traces, recent deploys, traffic spikes." }

Then THINK → propose hypotheses (DB pool exhaustion, bad query, autoscale lag). After each THINK, an EVALUATE step triggers log checks or tests. Continue only when EVALUATE returns OK. Final OUTPUT is a prioritized remediation plan.

Best practices

Few-shot: Seed the system prompt with 1–2 examples (math + bug). Models learn the pattern fast.

Automate EVALUATE if possible: CI test, health check, or small script returning

OK/fail.Limit rumination: Set max

THINKsteps or a time budget to avoid infinite loops.Keep OUTPUT concise: Final

OUTPUTshould be actionable and easy to consume by humans or systems.Treat generated steps as hypotheses: Always validate

THINKsteps with tests, logs, or humans.

Quick system prompt to paste

You are an AI assistant that strictly follows START → THINK → EVALUATE → OUTPUT.

Emit exactly one JSON object per message:

{ "step": "START | THINK | EVALUATE | OUTPUT", "content": "..." }.

After each THINK produce an EVALUATE and wait for confirmation before continuing.

Do multiple THINK steps as needed and only give a single final OUTPUT at the end.

Caveats (be honest)

This is still generated text — the model’s “thoughts” are not magical truths. Use EVALUATE (tests, humans, logs) to catch hallucinations. The protocol improves transparency and troubleshooting, but it doesn’t replace real verification.

Final nudge

Adopting START → THINK → EVALUATE → OUTPUT turns opaque one-liners into auditable workflows. It’s especially useful when you need reproducibility and fewer surprises. Try it on a small problem (math or a bug) and you’ll immediately feel the difference. Want me to produce a ready-to-drop-in system message plus two few-shot examples tailored for your app? I’ll whip that up — direct, pragmatic, and useful.